'p2pEdit' in a nutshell

p2pEdit is a distributed collaborative editing application that lets a group of people share HTML documents and modify them concurrently. It is a decentralized collaborative editor based on the JXTA platform. Users can create new groups and join existing ones.

Welcome

Welcome to the p2pEdit page. p2pEdit was developed by Asma Cherif and Mohammed Aymen Baouab (former developer) at the Cassis Team.

p2pEdit is a desktop collaborative editor that was later extended to mobile devices by Moulay Driss Mechaoui.

Features

This tool allows any user to:

- Create a new group or join an existing group

- Edit the shared document concurrently with other members of the group

- Edit access rights to protect any part of the shared document

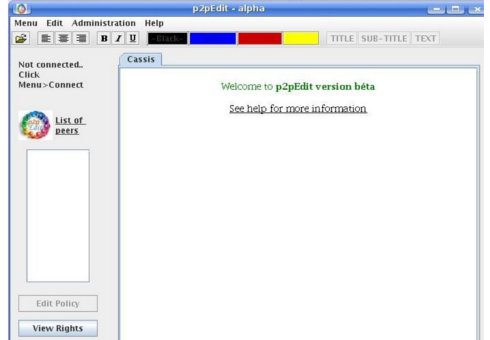

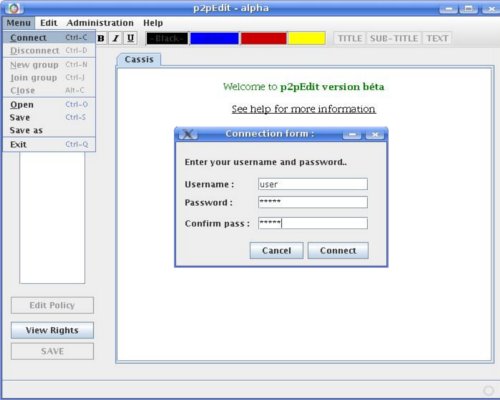

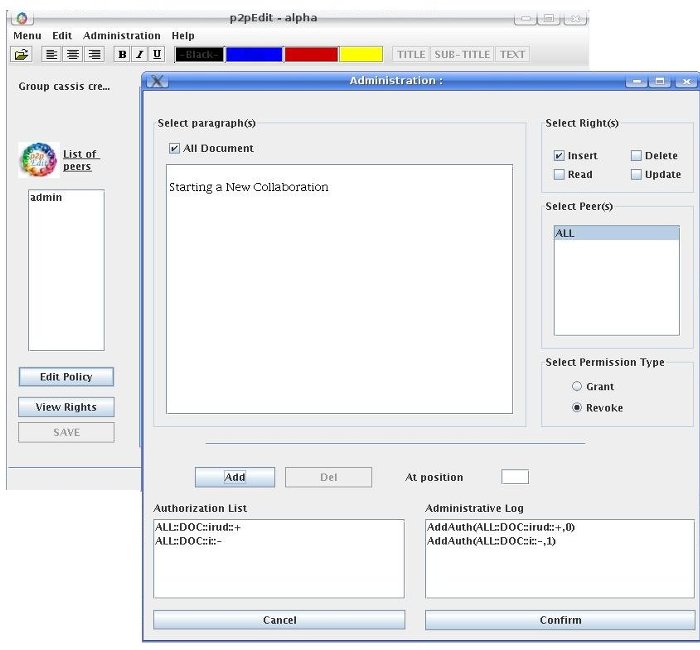

Screenshots

This section presents some screenshots illustrating how our prototype works.

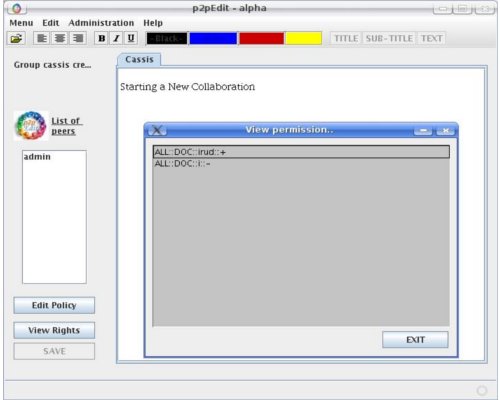

- Main window

- Connect Form

- Edit policy

- View Permissions

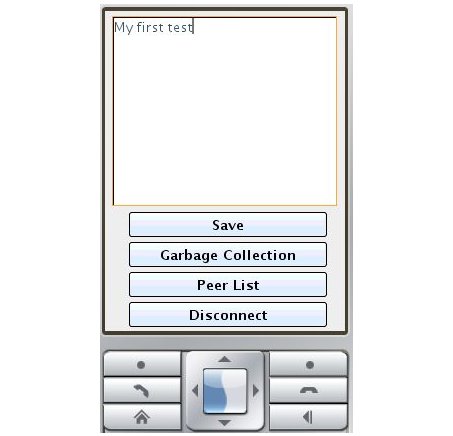

- CDC prototype

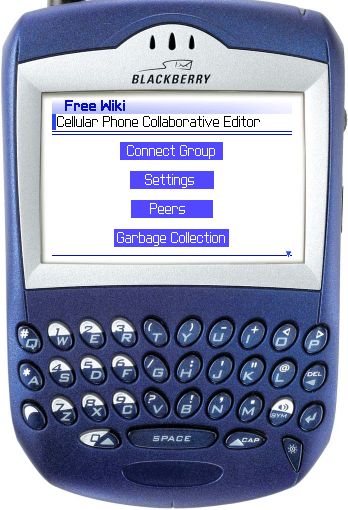

- CLDC prototype

Performance study

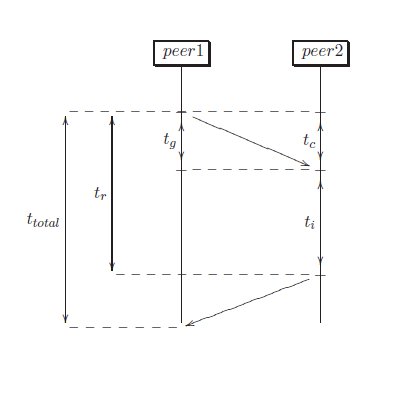

Experiments are necessary to understand what the asymptotic complexities mean when interactive constraints are present in the system. For our performance evaluation, we consider the following times:

- tg is the time required to generate a local request

- ti is the time to integrate a remote request

- tc is the time required to communicate a request to a peer through the network

- tr is the response time, given by tr = tg + ti + tc
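The decomposition above can be written down as a small helper. This is only an illustrative sketch: the `Timing` class, its field names and the sample values are hypothetical, and the 100 ms budget is the bound quoted for the experiments in this section, not a constant taken from the p2pEdit code.

```python
from dataclasses import dataclass

# Interactive bound quoted in the experiments of this section (assumption:
# expressed here as a plain constant in milliseconds).
INTERACTIVE_BUDGET_MS = 100.0


@dataclass(frozen=True)
class Timing:
    """Hypothetical container for the three measured times, in milliseconds."""
    tg_ms: float  # time to generate a local request
    ti_ms: float  # time to integrate a remote request
    tc_ms: float  # time to communicate a request to a peer

    @property
    def tr_ms(self) -> float:
        # Response time as defined above: tr = tg + ti + tc
        return self.tg_ms + self.ti_ms + self.tc_ms

    def within_budget(self, budget_ms: float = INTERACTIVE_BUDGET_MS) -> bool:
        return self.tr_ms < budget_ms


# Made-up numbers for illustration only; they are not measured results.
sample = Timing(tg_ms=10.0, ti_ms=20.0, tc_ms=30.0)
print(sample.tr_ms, sample.within_budget())
```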

We ran experimental tests with different log sizes and numbers of peers to observe the behaviour of our prototype. In both experiments, we consider the worst case. Since the worst case for canonization arises when an insertion is added to a log that contains only delete operations, we initialize the log with delete operations, then send an insert operation to many peers and measure the maximal response time. The policy contains at most 100 authorizations and may contain redundancies (the policy is not optimized).
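The setup described above can be sketched as a small timing harness. Everything here is hypothetical: the tuple encoding of operations, the function names, and in particular `naive_integrate`, which is a placeholder that merely scans the log and is not the p2pEdit integration or canonization algorithm. Peers are simulated sequentially in one process, so no network time is included.

```python
import time
from typing import Callable, List, Tuple

# Hypothetical encoding of an operation: (kind, position, character).
Operation = Tuple[str, int, str]


def worst_case_log(size: int) -> List[Operation]:
    """Build a log made only of delete operations (the worst case above)."""
    return [("del", 0, "") for _ in range(size)]


def naive_integrate(log: List[Operation], op: Operation) -> None:
    """Placeholder integration: walk the whole log, then append the operation.

    This is NOT the p2pEdit algorithm; it only gives the harness some work
    proportional to the log size.
    """
    for _ in log:
        pass
    log.append(op)


def max_response_ms(
    integrate: Callable[[List[Operation], Operation], None],
    log: List[Operation],
    op: Operation,
    peers: int,
) -> float:
    """Return the maximal time (ms) needed to integrate `op` over all peers."""
    worst = 0.0
    for _ in range(peers):
        peer_log = list(log)  # each simulated peer works on its own copy
        start = time.perf_counter()
        integrate(peer_log, op)
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        worst = max(worst, elapsed_ms)
    return worst


log = worst_case_log(10_000)
insert_op: Operation = ("ins", 0, "a")
print(max_response_ms(naive_integrate, log, insert_op, peers=5))
```

Taking the maximum over peers, rather than the mean, mirrors the "maximal response time" measured in the text.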

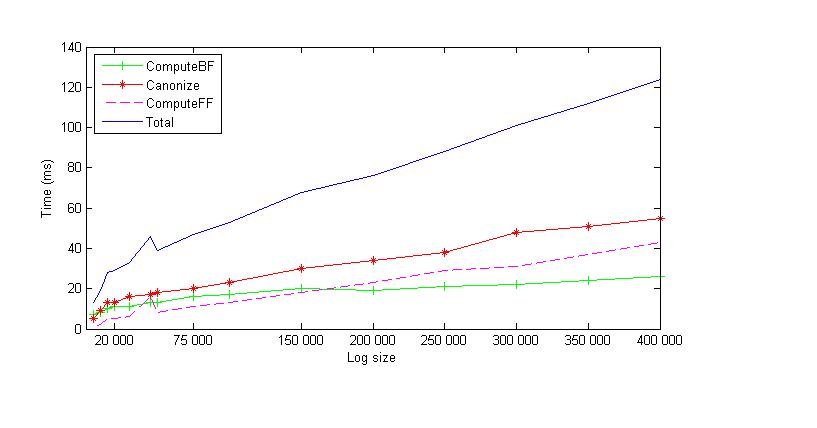

The following figure shows the response time for different log sizes. These measurements reflect the times tg, ti, tc and their sum tr. The execution time stays within 100 ms for all log sizes |H| < 300000, which is not achieved by the SDT and ABT algorithms.

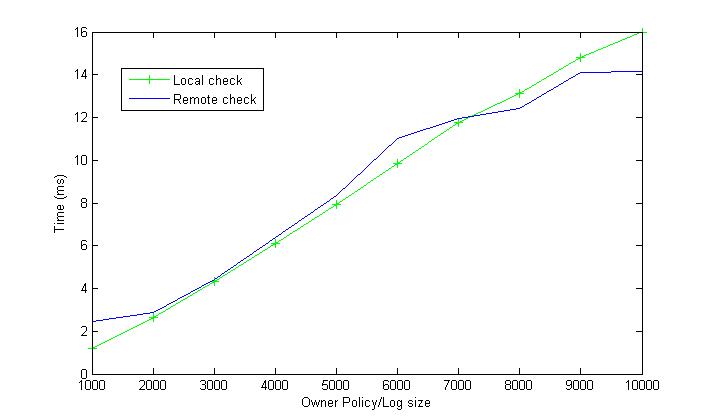

The second experiment measures the time required to check an operation against the policy. The figure presented below shows that the time required to check an operation is low (about 12 ms for a local operation and 16 ms for a remote one with an owner policy containing 10000 authorizations).

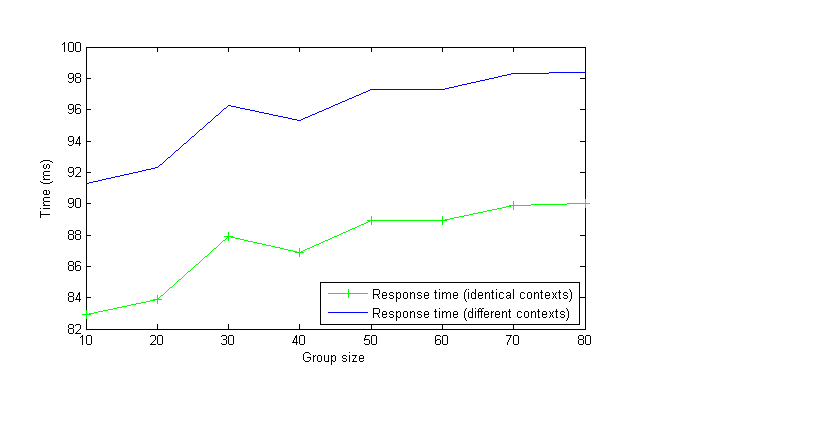
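To make the notion of checking an operation against a policy concrete, here is a minimal sketch. The policy model of p2pEdit is not described on this page, so the `Authorization` fields, the first-match rule and the default-deny behaviour are all assumptions chosen for illustration. With a linear scan like this one, the checking time grows with the number of authorizations, which is why the policy size matters in the measurement above.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Authorization:
    """Hypothetical authorization: grants or denies a right on a text range."""
    user: str
    right: str   # e.g. "insert" or "delete" (assumed names)
    start: int   # first position covered
    end: int     # last position covered, inclusive
    allow: bool  # True grants the right, False revokes it


def is_allowed(
    policy: Iterable[Authorization],
    user: str,
    right: str,
    position: int,
    default: bool = False,
) -> bool:
    """Return the decision of the first matching authorization.

    Assumption: first-match semantics with a default decision when nothing
    matches. The real p2pEdit rule may differ.
    """
    for auth in policy:
        if (
            auth.user == user
            and auth.right == right
            and auth.start <= position <= auth.end
        ):
            return auth.allow
    return default


policy = [
    Authorization("alice", "insert", 0, 9, allow=False),   # protect the header
    Authorization("alice", "insert", 0, 99, allow=True),   # rest of the document
]
print(is_allowed(policy, "alice", "insert", 5))
print(is_allowed(policy, "alice", "insert", 50))
```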

The third experiment measures the response time according to the number of peers. This experiment was done on the Grid5000 platform. Grid5000 is an experimental grid whose goal is to ease research work on grid infrastructures. The resources are shared by only a few dozen users and may be reserved and tailored for specific experiments. Users can get root access on them and deploy their own operating system.

This platform is made of 13 clusters, located in 9 French cities and comprising 1047 nodes with 2094 CPUs. Within each cluster, the nodes are located in the same geographic area and communicate through Gigabit Ethernet links. Communications between clusters go through the French academic network (RENATER).

In this experiment, we fixed the log size to 150000 and measured the response time for different numbers of peers (80 peers at most). The response time corresponds to the time required for an operation to be seen at a remote site. The curve shows two cases: in the first, the policy remains the same (the context does not change at the sender and the receiver site, see Appendix); in the second, the policy changes. For a cooperative log containing 150000 operations and a policy containing 80 owner policies, each with 5000 authorizations, the response time is less than 100 ms.